Pendo found that 80% of features in the average software product are rarely or never used. Publicly traded cloud software companies collectively spent up to $29.5 billion building those features. That figure does not account for the internal cost of debates, roadmap reshuffles, and engineering morale spent on work that users ignore. Product teams know they need to prioritize. Most of them use a framework to do it. And most of them still get it wrong, because the numbers they feed into those frameworks are invented at a whiteboard rather than gathered from the people who will use the product.

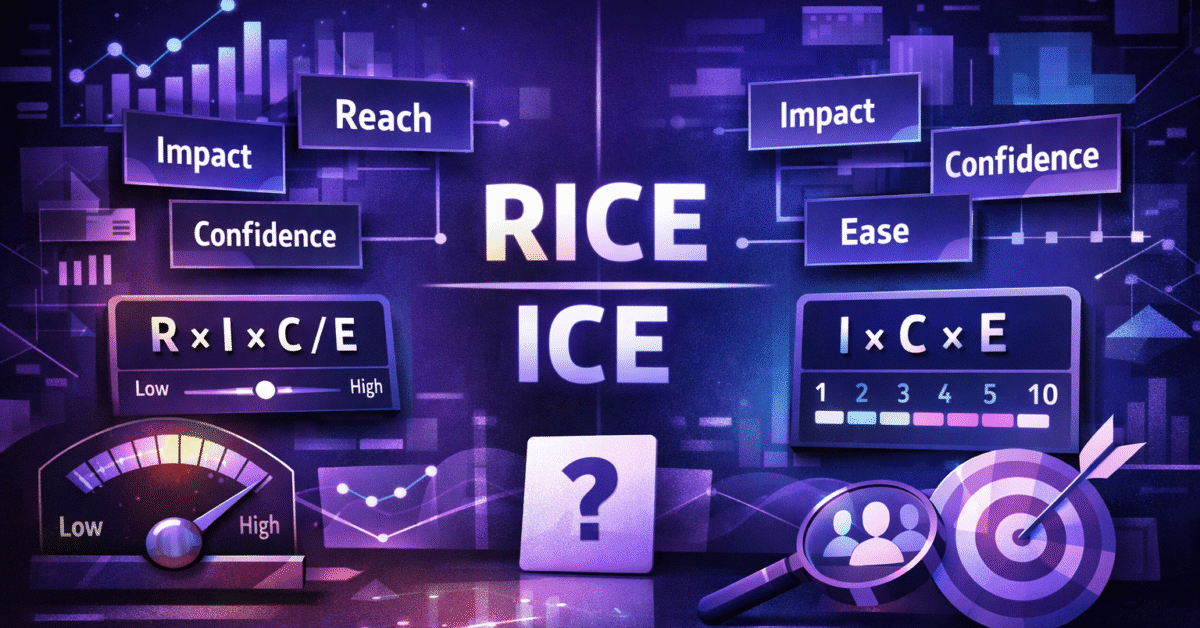

RICE and ICE are 2 of the most common prioritization scoring models in product management. Both produce a single number that ranks features against each other. Both rely on estimates for their inputs. And both collapse into theater when those estimates are based on internal conviction rather than evidence. What separates a useful RICE or ICE score from a misleading one is not the math. It is the quality of information behind each variable. This article breaks down both frameworks, shows where each one fails without research, and explains how to feed validated data into every scoring component so that your roadmap holds up under scrutiny.

How the RICE Framework Works

Intercom built RICE from first principles after testing and iterating on several internal scoring methods. The 4 factors are Reach, Impact, Confidence, and Effort. The formula multiplies Reach by Impact by Confidence, then divides by Effort.

Reach

Reach measures how many people a feature will affect within a set time period. Intercom’s team framed it as “how many customers will this project impact over a single quarter?” The recommendation is to use real product metrics wherever possible rather than guessing.

Impact

Because Impact is hard to pin down with precision, Intercom uses a multiple-choice scale. A score of 3 means massive impact, 2 is high, 1 is medium, 0.5 is low, and 0.25 is minimal. These values scale the final score accordingly.

Confidence

This is where the framework gets interesting. Intercom’s own documentation provides specific examples. A project backed by quantitative metrics for reach, user research for impact, and an engineering estimate for effort receives 100% confidence. A project where reach and effort have data support but impact is uncertain gets 80%. A project running mostly on assumptions gets 50%.

The gap between 100% and 50% confidence halves the priority score. That is not a rounding error. It determines what gets built and what sits in the backlog for another quarter.
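The effect of those tiers can be sketched in a few lines of Python. This is an illustration rather than Intercom's implementation: the function names are hypothetical, the project numbers are made up, and treating any 2 backed inputs as the 80% tier is an assumption, since the documented example only covers the case where Impact is the uncertain one.

```python
def confidence_from_evidence(reach_has_metrics: bool,
                             impact_has_research: bool,
                             effort_has_estimate: bool) -> float:
    """Map the evidence behind each input to a Confidence tier.

    Assumption: any two backed inputs count as the 80% tier. The
    documented example only covers the case where Impact is unbacked.
    """
    backed = sum([reach_has_metrics, impact_has_research, effort_has_estimate])
    if backed == 3:
        return 1.0
    if backed == 2:
        return 0.8
    return 0.5


def priority(reach: float, impact: float, confidence: float, effort: float) -> float:
    """RICE-style score: Reach x Impact x Confidence, divided by Effort."""
    return reach * impact * confidence / effort


# Hypothetical project: identical Reach, Impact, and Effort, different evidence.
evidenced = priority(1000, 2, confidence_from_evidence(True, True, True), 4)
assumed = priority(1000, 2, confidence_from_evidence(False, False, False), 4)
print(evidenced, assumed)  # 500.0 250.0
```

Nothing about the feature changed between the two calls. Only the evidence behind it did, and the score dropped by half.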

Effort

Effort is estimated in person-months and accounts for design, engineering, and any other work required to ship. It sits in the denominator, so higher effort pushes the score down.
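Putting the 4 factors together, a minimal sketch of the calculation might look like the following. The function name, the label-based lookup, and the example feature are assumptions made for illustration. Only the formula and the Impact scale come from the description above.

```python
# Impact multipliers from the multiple-choice scale described above.
IMPACT_SCALE = {
    "massive": 3.0,
    "high": 2.0,
    "medium": 1.0,
    "low": 0.5,
    "minimal": 0.25,
}


def rice_score(reach: float, impact_label: str,
               confidence: float, effort_person_months: float) -> float:
    """Return (Reach x Impact x Confidence) / Effort."""
    if impact_label not in IMPACT_SCALE:
        raise ValueError(f"unknown impact label: {impact_label!r}")
    if not 0 < confidence <= 1:
        raise ValueError("confidence must be a fraction in (0, 1]")
    if effort_person_months <= 0:
        raise ValueError("effort must be positive")
    return reach * IMPACT_SCALE[impact_label] * confidence / effort_person_months


# Hypothetical feature: 450 customers per quarter, high impact,
# 80% confidence, 3 person-months of effort. Works out to roughly 240.
print(rice_score(450, "high", 0.8, 3))
```

Because Effort is the divisor, doubling it to 6 person-months would cut the same feature's score in half.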

Atlassian has noted that RICE can be time-consuming and cumbersome, particularly when multiple items require data and validation from several sources. Methods for determining each factor can also change over time, which introduces subjectivity and inconsistency.

How the ICE Framework Works

Sean Ellis, who coined the term “growth hacking,” created ICE as what his team at GrowthHackers called a “minimum viable prioritization framework.” Each item gets a score from 1 to 10 for Impact, Confidence, and Ease. Multiply the 3 numbers together, and you have the ICE score.
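As a rough sketch, the whole model fits in one small function. The function name and the example scores are hypothetical. The 1-to-10 range and the multiplication follow the description above.

```python
def ice_score(impact: int, confidence: int, ease: int) -> int:
    """Multiply three 1-to-10 ratings into a single ICE score."""
    for name, value in (("impact", impact), ("confidence", confidence), ("ease", ease)):
        if not 1 <= value <= 10:
            raise ValueError(f"{name} must be between 1 and 10, got {value}")
    return impact * confidence * ease


# Hypothetical experiment rated 8 for Impact, 6 for Confidence, 7 for Ease.
print(ice_score(8, 6, 7))  # 336
```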

The biggest advantage is speed. Decisions happen fast, and for teams running rapid experiments, that matters. The main criticism, though, is subjectivity. Newer growth teams with little historical data will struggle to assign accurate Impact or Confidence scores.

RICE improved on ICE by adding Reach. Without that factor, ICE can push teams toward features that excite a small group of users while overlooking opportunities that serve thousands. The Reach component forces a conversation about audience size that ICE skips entirely.

The fact that a low Ease score drags down an ICE score so easily reveals the model’s roots in growth hacking, where failing fast is the goal and teams avoid sinking large amounts of time into any single project. But some high-impact work requires a larger investment. Relying on ICE alone can keep a team chasing low-hanging fruit while bigger opportunities go unaddressed.
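A short example with invented numbers shows the pull toward low-hanging fruit. Both ideas and their ratings are hypothetical. The point is only that a low Ease rating can outweigh a much higher Impact rating.

```python
def ice(impact: int, confidence: int, ease: int) -> int:
    """ICE score: the product of three 1-to-10 ratings."""
    return impact * confidence * ease


# Hypothetical ideas as (Impact, Confidence, Ease). Same Confidence for both.
ideas = {
    "copy tweak on signup page": (4, 7, 9),
    "rebuilt onboarding flow": (9, 7, 2),
}

# Sort idea names from highest ICE score to lowest.
ranked = sorted(ideas, key=lambda name: ice(*ideas[name]), reverse=True)
for name in ranked:
    print(name, ice(*ideas[name]))
# copy tweak on signup page 252
# rebuilt onboarding flow 126
```

The rebuild has more than twice the Impact rating, yet it scores half as much because Ease sits in the product with equal weight.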

Where Both Frameworks Break Without Research

Both models share the same vulnerability in Confidence. In RICE, Confidence multiplies against Reach and Impact before the division by Effort. In ICE, Confidence is 1 of only 3 multiplied factors, so doubling or halving it doubles or halves the final score. When teams assign Confidence based on internal opinion, the scoring exercise becomes performative.

RICE scoring requires estimations, and those estimations can be inaccurate. Team members can inflate scores to match their enthusiasm for a particular idea, sometimes intentionally and sometimes not. ICE has the same problem. Results vary depending on who you ask and when you ask them.

The downstream effects are predictable. Between 66% and 90% of features fail to deliver expected value. Some 23% of product development investments fail due to unclear company strategies. McKinsey research shows that over 50% of product launches miss their business targets. And over 40% of companies do not collect feedback from end users at all.

Teams do not lack frameworks. They lack the evidence that makes those frameworks function properly.

How Research Inputs Improve Each Score

When you break down each scoring component, the role of user research becomes hard to ignore.

Feeding Reach With Real Data

Intercom recommends using actual product metrics for Reach. Their example: 500 customers reach a point in the signup funnel each month and 30% choose a specific option, which yields a reach of 500 × 30% × 3 months = 450 customers per quarter. Without analytics showing actual funnel behavior and segment sizes, Reach is a fabrication.
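The funnel arithmetic is simple enough to script against real analytics exports. The sketch below uses a hypothetical helper name and assumes a quarter is 3 months of steady traffic, which a seasonal product would need to adjust.

```python
def quarterly_reach(monthly_users: int, adoption_rate: float, months: int = 3) -> float:
    """Estimate Reach as users hitting a funnel step x share choosing the option x months.

    Assumption: traffic is roughly constant across the period.
    """
    if not 0 <= adoption_rate <= 1:
        raise ValueError("adoption_rate must be a fraction between 0 and 1")
    return monthly_users * adoption_rate * months


# Intercom's example: 500 customers per month, 30% choose the option.
print(round(quarterly_reach(500, 0.30)))  # 450
```

Both inputs should come from product analytics. If either one is a guess, the output is a guess with a decimal point.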

For newer products or untested market segments where behavioral data does not yet exist, marketing research methodology can help estimate market size and likely adoption. But those estimates still need grounding in some form of audience data, not a round number pulled from a planning meeting.

Feeding Impact With Qualitative Evidence

Interview findings, usability test results, and behavioral patterns reveal not only how many users will encounter a feature but how much it will matter to them. In 1 scoring example, a team assigned an Impact score of 3.0 and a Confidence of 90% because they had extensive user interviews, support tickets, and session recordings showing users struggling with current onboarding. Compare that to a team guessing that onboarding “might be important.” The scoring posture is completely different.

Feeding Confidence With Proof

Atlassian recommends using Confidence to debate why 1 idea has higher certainty than another, then updating those estimates as teams learn more about a customer problem. The better framing for Confidence is not “How confident are you?” but “How much evidence do you have that makes you confident?” That reframe forces honesty. It separates gut feeling from tested knowledge.

The Timing Problem

The gap between “research is valuable” and “research is practical” is where most frameworks fall apart in sprint environments. The RICE framework brings Confidence into the conversation explicitly, and that helps teams identify which ideas need more investigation. But if “more investigation” means a 3-week research cycle, the team either waits and loses momentum, or ships with low Confidence and absorbs the risk.

ICE works well when the problem is momentum. If a team is stuck debating small bets or quick improvements, ICE reduces overhead. But moving fast with low Confidence is how teams contribute to that $29.5 billion in wasted feature spend.

The operational challenge is compressing validation into sprint timelines so that Confidence scores are backed by something before planning commits resources.

How Evelance Fits Into the Scoring Workflow

This timing gap is where predictive user research closes the loop. At Evelance, tests complete in minutes rather than weeks. The platform provides access to over 1,000,000 predictive personas filtered by demographics, professions, behaviors, and psychological profiles, and teams can describe any niche segment and generate matching audiences without panel limitations.

That segmentation capability feeds directly into the Reach component of RICE. If you are estimating how many users a feature will affect, testing across precise segments like working mothers who use healthcare apps or senior executives who prefer desktop interfaces produces far sharper Reach numbers than broad demographic assumptions.

Evelance delivers results 85% faster than traditional user research, going from setup to usable insights in 10 minutes across over 100 data points and scores. The platform measures 12 psychological scores, including Interest Activation, Credibility Assessment, and Action Readiness. These map directly to Impact scoring. A feature with high Interest Activation and Action Readiness among its target audience supports a higher Impact rating. A feature with low Credibility Assessment scores signals that the concept needs rework before it earns engineering resources.

Teams report finding 40% more insights when live sessions explore pre-validated designs rather than discovering fundamental problems from scratch. That efficiency gain feeds back into prioritization accuracy. Every insight surfaced before scoring begins produces a more trustworthy RICE or ICE calculation.

The hybrid approach works like this: teams run initial validation through predictive models, then focus live interviews on the specific issues that surface. This preserves the depth of human sessions while compressing validation cycles to fit sprint timelines.

Choosing Between RICE and ICE

The comparison is not about which framework is superior. It is about matching the framework to the decision type.

RICE is moderately complex and works best with usage data combined with estimates. It fits teams of 5 to 50+ members and suits ranking a large backlog with some rigor. ICE is simple and runs on estimates alone. It fits teams of 2 to 20 and works best for quick ranking when data is limited.

A common hybrid approach uses Kano analysis for discovery to identify which problems and features matter most, RICE for roadmap prioritization, and ICE for experiments and quick wins. Most teams move from ICE to RICE to custom weighted scoring as their data maturity grows. That progression tracks directly with research capability. As teams accumulate more validated evidence, the additional granularity of RICE becomes more valuable because there is real data to populate each component.

Practical Application

A team evaluating 3 features for the next quarter might assign Confidence scores of 70% to 80% based on internal conviction. With research, the picture shifts.

- Feature A tests against a predictive audience matching its target user profile. The design surfaces friction points the team missed. Confidence drops, Impact is revised downward, and the RICE score falls.

- Feature B, originally a secondary priority, tests well with a specific user segment. The behavioral pattern driving demand turns out to be stronger than estimated. Both Confidence and Impact increase. In RICE, Reach is also validated because the segment data confirms how many users actively exhibit the relevant behavior. The feature moves up.

- Feature C shows mixed signals: strong appeal among power users but confusion among new users. The team now has precise data to refine the feature scope, potentially reducing Effort while maintaining Impact for the higher-value segment.
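To make the shift concrete, the sketch below re-scores the 3 features before and after research. Every number is invented for illustration, including the assumption that Feature C is narrowed to its power-user segment. Only the direction of each change follows the scenario above.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users per quarter
    impact: float      # 0.25 to 3 scale
    confidence: float  # fraction between 0 and 1
    effort: float      # person-months

    @property
    def rice(self) -> float:
        return self.reach * self.impact * self.confidence / self.effort


def ranking(features: list[Feature]) -> list[str]:
    """Feature names ordered from highest RICE score to lowest."""
    return [f.name for f in sorted(features, key=lambda f: f.rice, reverse=True)]


# Hypothetical scores based on internal conviction.
before = [
    Feature("A", reach=2000, impact=2, confidence=0.8, effort=4),
    Feature("B", reach=1500, impact=1, confidence=0.7, effort=3),
    Feature("C", reach=1000, impact=2, confidence=0.7, effort=5),
]

# Hypothetical scores after research: A loses Impact and Confidence,
# B gains both, C is scoped to power users with less Effort.
after = [
    Feature("A", reach=2000, impact=1, confidence=0.5, effort=4),
    Feature("B", reach=1500, impact=2, confidence=1.0, effort=3),
    Feature("C", reach=600, impact=2, confidence=0.8, effort=3),
]

print(ranking(before))  # ['A', 'B', 'C']
print(ranking(after))   # ['B', 'C', 'A']
```

In this made-up case the top-ranked feature falls to last place once evidence replaces conviction, which is the kind of reversal a scoring session without research cannot surface.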

What Holds the Whole Thing Together

Atlassian has acknowledged that historically, priorities at many organizations were based largely on gut feel, leading to passionate discussions built on assumptions. Their recommendation is to do data-gathering homework before any meeting where priorities are set.

Data-driven product teams are 2.9 times more likely to launch products that meet business goals. RICE and ICE are sound frameworks. Their accuracy depends entirely on the quality of inputs. Without user research, Confidence scores are opinions, Impact scores are hopes, and Reach estimates are projections. With rapid, targeted validation built into the scoring workflow, these frameworks deliver on their original promise: objective, defensible prioritization that puts engineering resources behind the features users actually need.

Mar 19,2026
